In today’s world, Ireland is famous for vibrant cities, cosy pubs and cold Guinness, but in a simpler time, before humans got involved, it was the land of giant deer, grey wolves and brown bears. Although some of these animals can still be seen elsewhere, others sadly vanished forever. Here are five of the coolest animals that ever roamed the Emerald Isle.

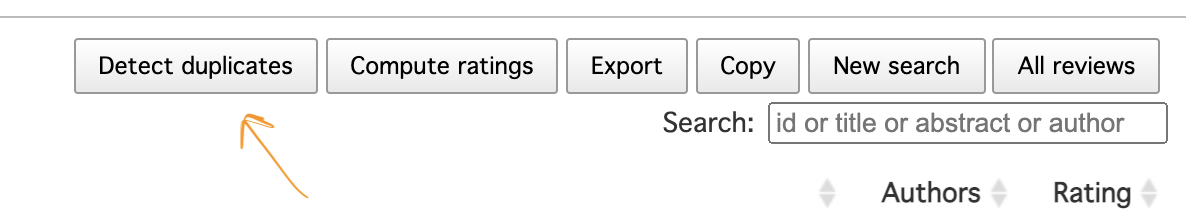

Number 1 – The Great Auk

Despite being dubbed the original penguin, the great auk was not actually a penguin at all but a striking example of convergent evolution. Fittingly, the great auk’s scientific name is Pinguinus impennis, and when European explorers found the first penguins in the Southern Hemisphere, they noticed the birds’ uncanny resemblance to the great auk, which is how penguins got their modern name. The great auk had a white belly and a black back, stood around 85 cm tall and weighed around 5 kg. It had small wings for swimming and a large beak for eating fish and krill. The great auk was once a common sight along Irish coastlines, with remains found at popular tourist spots in Donegal and Galway. Much like penguins, it was utterly defenceless on land, which contributed to its extinction in the 1840s as a result of widespread hunting for food and bait.

Number 2 – The Irish Lynx

Today, lynxes (a group of wildcats that includes the bobcat) are found mainly across Siberia and North America, but these majestic cats were once widespread across the island of Ireland. Their presence in Ireland was not known until the late 1930s, when a few hikers found a lynx jawbone (mandible) in County Waterford. The Irish lynx most likely roamed the woods and countryside, preying on small deer and hares. Lynxes are known to have survived in Britain at least until the Romans arrived; however, there is no indication of when they went extinct in Ireland. More recently, the lynx has been considered for reintroduction, which could help balance woodland ecosystems and increase biodiversity. The hope is that bringing back this native predator would reduce numbers of invasive sika deer, which currently have no natural predators in Ireland.

Number 3 – The Irish Wolf

Wolves were a major part of Ireland’s wildlife, with remains dating back as far as 34,000 BC. The Irish word for wolf is Mac Tíre, meaning “Son of the Countryside”, which shows how important wolves were to the people of Ireland. Indeed, many Irish stories, myths and folktales feature wolves and tell of how the Irish gods adored them. Before the great agricultural revolution on the island, most of the countryside was clothed in thick forest, perfect hunting habitat for wolves. It wasn’t until the Cromwellian era of the 1650s that Ireland’s wolves came under threat. Cromwell wanted Ireland rid of its wolves and ordered a mass cull, offering bounties of £5 for a male, £6 for a female and 40 shillings for a cub. Wolf numbers plummeted, and the last wolf in Ireland was killed in County Carlow in 1786. Today, only a few reminders of Ireland’s wolves remain: ring forts that were once used to protect sheep, place names and the great Irish Wolfhound.

Number 4 – The Irish Bear

For thousands of years, brown bears roamed Ireland, preying on deer and fishing streams for salmon. Much like modern bears in North America, Irish bears hibernated in caves over the long winter months. Remarkably, DNA evidence suggests that the Irish brown bear is the maternal ancestor of the polar bear, challenging the earlier view that North American bears were its ancestors. The two species are also thought to have interbred opportunistically within the last 100,000 years, which means they must have come into contact during the last ice age. The Irish brown bear went extinct around 2,500 years ago, mostly due to widespread deforestation and hunting. A famous Irish myth tells of a sleeping bear god who will rise from hibernation and come to the aid of his people when called. The bear god’s summoners were the Mahons, the “sons of the bear”. The Mahons later became the McMahons, now a common surname around the world. Today, all that remains to remember Ireland’s bears are a few sculptures and a Guinness poster.

Number 5 – The Irish Elk

Megaloceros giganteus, the Irish elk, is one of the largest deer that ever lived. It stood seven feet tall at the shoulder, and its antlers spanned an impressive 12 feet. These enormous antlers are thought to be the product of sexual selection, a trait for impressing females. It had long been thought that the antlers were purely for display, but scientists have recently suggested that they may also have been used in contests between males. The largest males weighed a massive 1,500 lb, roughly the size of a modern Alaskan moose. Strangely, the Irish elk is not actually an elk but a giant deer; the name was coined because of its sheer size, as the original excavators believed they had found the remains of an extinct species of elk. Nor was it exclusive to Ireland: it was named after the island because its most famous and best-preserved fossils were found in Irish peat bogs. Although impressive, its wide antlers are thought to have become a maladaptation that contributed to the species’ extinction around 7,700 years ago.

Image sources:

- Great auk image: https://www.bbc.com/news/science-environment-50563953
- Irish lynx image: https://www.breakingnews.ie/lifestyle/four-amazing-animals-that-could-be-reintroduced-to-ireland-1019270.html
- Ring fort image: https://www.amazing-grace.ie/an-grianan-of-aileach
- Irish wolf mythology image: https://earthandstarryheaven.com/2015/05/13/irish-werewolves/
- Guinness bear image: https://commons.wikimedia.org/wiki/File:Guinness_StoreHouse,_Dublin._Advertising_Exhibit._-_geograph.org.uk_-_626611.jpg
- Irish elk image: http://news.bbc.co.uk/2/hi/uk_news/northern_ireland/8316262.stm
